According to people familiar with the process, some of the web’s biggest video platforms have begun using automation to remove extremist content from their sites. This is a major step for internet giants that are eager to remove violent propaganda from their platforms and are under considerable pressure from governments around the world as extremist attacks increase, from Belgium to Syria to the United States. Facebook and YouTube are two prominent sites that have begun deploying systems to block or rapidly take down Islamic State videos and other similar content.

The technology was originally developed to identify and remove copyright-protected content from video sites. It looks for ‘hashes’, a kind of unique digital fingerprint that internet companies automatically assign to specific videos, which lets them remove any content with a matching fingerprint. Such a system would immediately catch attempts to repost content that has already been deemed unacceptable, but it would not block content that has not been seen before. The companies did not confirm that they were using the technology, nor did they explain how it was being employed.
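The matching idea can be illustrated with a minimal sketch. It assumes a plain SHA-256 digest of the file bytes stands in for the fingerprint; the companies have not disclosed which fingerprinting method they use, and the names `fingerprint` and `BannedContentIndex` are hypothetical, chosen only for illustration.

```python
import hashlib


def fingerprint(video_bytes: bytes) -> str:
    """Return a hex digest used here as a stand-in fingerprint (assumption: SHA-256)."""
    return hashlib.sha256(video_bytes).hexdigest()


class BannedContentIndex:
    """Hypothetical in-memory set of fingerprints for content already judged unacceptable."""

    def __init__(self) -> None:
        self._hashes: set[str] = set()

    def ban(self, video_bytes: bytes) -> None:
        # Record the fingerprint so later re-uploads can be recognized.
        self._hashes.add(fingerprint(video_bytes))

    def is_banned(self, video_bytes: bytes) -> bool:
        # Only content whose fingerprint is already on file will match.
        return fingerprint(video_bytes) in self._hashes


index = BannedContentIndex()
index.ban(b"bytes of a video already judged unacceptable")

# A repost of the same bytes matches; content never seen before does not.
print(index.is_banned(b"bytes of a video already judged unacceptable"))
print(index.is_banned(b"bytes of a video never seen before"))
```

A cryptographic digest like this matches only byte-identical files, so a re-encoded or trimmed copy would slip through. A fingerprint that tolerates such changes would be needed to catch altered copies, and that is not shown here.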

However, people familiar with the technology said that posted videos could be checked against a database of banned content to flag new postings of material such as a beheading or a lecture encouraging violence. The sources did not say how much human work goes into reviewing videos that the technology identifies as matches or near-matches. Nor did they say how the videos in the database were initially identified as extremist. The use of the technology is highly likely to be refined over time as internet companies discuss the issue internally, with competitors and with other interested third parties.

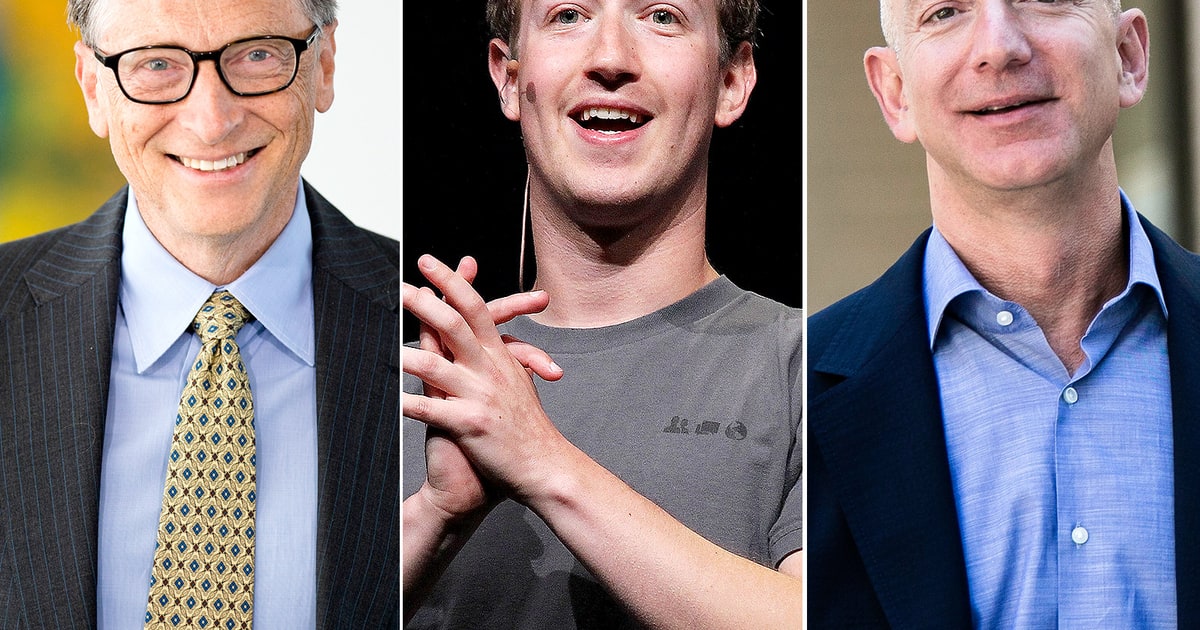

In late April, under pressure from US President Barack Obama and other US and European leaders worried about online radicalization, internet firms including Twitter Inc., Alphabet Inc.’s YouTube, CloudFlare and Facebook Inc. held a call to discuss their options, which included a content-blocking system introduced by the private Counter Extremism Project. The discussions highlighted the central yet difficult role that some of the most influential companies now play in dealing with issues such as free speech, terrorism and the lines between corporate and government authority.

So far, none of these companies has embraced the anti-extremist group’s system, and they have been wary of outside input on how their websites should be policed. Experts said that dealing with terrorism is not the same as dealing with issues such as child pornography and copyright infringement, where the material is clearly illegal. Extremist content, by contrast, exists on a spectrum, and each company draws the line in a different place. Until now, most of them have relied on users to flag content that violates their policies.
